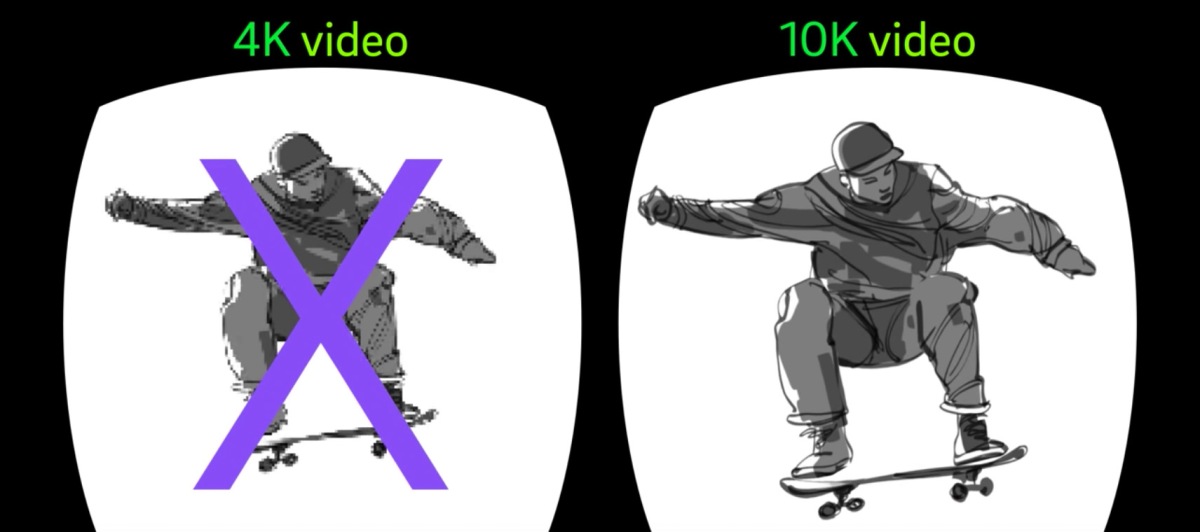

Kudos to Aaron Rhodes and Sean Safreed for the first of many Pixvana videos that outline some of the unique challenges, and solutions, to making great stories and experiences using video in Virtual Reality. This video tackles the challenge of working with *really* big video files on relatively underpowered devices and networks. The general approach is something we think of as “field of view adaptive streaming”. Traditional adaptive streaming keeps multiple files on the server/CDN so that a good video stream is available to the client device at any given time. In VR we have to tackle the additional complexity of *where* a viewer is looking within that video. The notion of using “viewports” to break the video up into many smaller videos, each highly optimized for a given field of view (FOV), is something we are firing away on at the office these days.
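To make the idea a bit more concrete, here is a rough Python sketch of the two decisions a client might make: which viewport to request given where the viewer is looking, and which bitrate to request given the network. This is not our actual pipeline. The six-viewport layout, the bitrate ladder, and every name in it (`Viewport`, `pick_viewport`, `pick_bitrate`) are assumptions made up for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Viewport:
    """One pre-encoded video, optimized for a viewing direction (hypothetical)."""
    name: str
    yaw_deg: float    # left/right angle of the viewport center
    pitch_deg: float  # up/down angle of the viewport center


def to_unit_vector(yaw_deg, pitch_deg):
    """Convert a yaw/pitch direction into a 3D unit vector."""
    yaw, pitch = math.radians(yaw_deg), math.radians(pitch_deg)
    return (
        math.cos(pitch) * math.sin(yaw),
        math.sin(pitch),
        math.cos(pitch) * math.cos(yaw),
    )


def angular_distance_deg(a, b):
    """Angle in degrees between two unit vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot))))


def pick_viewport(viewports, gaze_yaw_deg, gaze_pitch_deg):
    """Pick the viewport whose center is closest to where the viewer looks."""
    gaze = to_unit_vector(gaze_yaw_deg, gaze_pitch_deg)
    return min(
        viewports,
        key=lambda v: angular_distance_deg(
            gaze, to_unit_vector(v.yaw_deg, v.pitch_deg)
        ),
    )


def pick_bitrate(ladder_kbps, bandwidth_kbps):
    """Classic adaptive streaming step: highest bitrate the network can carry."""
    affordable = [b for b in ladder_kbps if b <= bandwidth_kbps]
    return max(affordable) if affordable else min(ladder_kbps)


# Assumed layout: six viewports, one per cube-face direction.
VIEWPORTS = [
    Viewport("front", 0, 0),
    Viewport("right", 90, 0),
    Viewport("back", 180, 0),
    Viewport("left", -90, 0),
    Viewport("up", 0, 90),
    Viewport("down", 0, -90),
]

if __name__ == "__main__":
    chosen = pick_viewport(VIEWPORTS, gaze_yaw_deg=80, gaze_pitch_deg=10)
    bitrate = pick_bitrate([2000, 6000, 12000], bandwidth_kbps=7000)
    print(chosen.name, bitrate)
```

The bitrate step is the traditional part; the viewport step is the extra VR-specific dimension. A real player would also have to decide when to switch viewports as the head moves, which this sketch ignores.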

So, should we call this FOVAS for short, for Field of View Adaptive Streaming? It is kind of weird, but it makes a lot of sense… I’m using the term regularly, so maybe it will stick!

Here’s the video: